Zero-Downtime VPN Load Balancing: Algorithms, Health Checks, and Scaling in 2026

Content of the article

- Why you need vpn load balancing and why it matters in 2026

- Architectural patterns for vpn load balancing: from l4 to anycast

- Load balancing algorithms: from simple to advanced

- Health checks and monitoring: checking tunnel quality, not just port status

- Session persistence for vpn: how to "stick" clients properly

- Vpn scaling: vertical, horizontal, and «smart» autoscaling

- Security and reliability: ddos, zero trust, and compliance

- Practical scenarios and templates for openvpn, wireguard, and ipsec

- Metrics, slos, and economics: how to know it’s working

- Common mistakes and anti-patterns: where teams often trip up

- Step-by-step plan to implement vpn load balancing

- Real-world cases: what worked and what didn’t

- Pre-production checklist: did we miss anything?

- Faq: common questions about vpn load balancing

Why You Need VPN Load Balancing and Why It Matters in 2026

VPN Has Evolved from Just Remote Access to a Mission-Critical Network

Just a few years ago, VPNs were mostly associated with remote work and internal system access. Today, that’s changed. In 2026, VPNs serve as the backbone for Zero Trust architectures, hybrid clouds, development environments, gateways to AI clusters, and edge locations. Downtime that used to be inconvenient now disrupts CI pipelines, breaks transactions, and impacts your NPS even with just a minute lost. Load balancing for VPN servers is no longer a luxury—it’s a foundational pillar of your network reliability.

Why has the load increased? We’re witnessing real-time video support traffic, IoT telemetry, and data sync for LLM inference at the edge. And VPNs aren’t just TCP anymore: WireGuard and IPsec run over UDP, demanding a different approach to health checks, NAT forwarding, and session persistence. We’re living fast and scaling smoothly—or at least trying to.

The key takeaway is simple: without smart request distribution across VPN nodes, you’ll hit CPU, EPOLL, socket, and state table limits. Think of the load balancer as your VPN’s conductor, keeping the pipes in tune and pacing the traffic through peak loads.

Common Signs of Overload You’ve Already Encountered

Most of us have seen this: user ping times jump around and throughput drops during traffic spikes. CPU on some nodes spikes to 90-100%, while others sit idle. Users complain about constant reconnections, WireGuard peers disappear for minutes, and OpenVPN logins time out during TLS handshakes. This is what happens when one node drags the whole caravan and the rest are just waiting.

Add to that UDP DDoS attacks, sudden surges after a release or start of workday, and shifting routes in multi-cloud environments. Without a solid load balancing architecture, you lose SLAs and money. The frustrating part? Many of these issues can be fixed not with new hardware, but with smarter algorithms and health checks—essentially free but timely and precise.

What’s Changed by 2026: New Trends and Realities

DPUs and SmartNICs, eBPF/XDP offloads, Anycast+BGP at the edge, cross-region L4 load balancers handling millions of packets per second, and autoscaling based on real session metrics have all arrived. We’re increasingly using Maglev and ring-consistent hashing, building sticky sessions for UDP based on the 5-tuple or peer ID, and leveraging session resumption for TLS, IPsec, and IKEv2. Post-quantum algorithms are now in pilot stages—cryptography just got heavier. Everyone wants "scale with minimalist design": fewer hacks, more predictability, and automation.

Architectural Patterns for VPN Load Balancing: From L4 to Anycast

Classic Setup: L4 Load Balancers in Front of VPN Pools

A straightforward approach is placing an L4 load balancer before a pool of VPN servers to distribute incoming connections. This works well for OpenVPN (TCP or UDP), WireGuard (UDP), and IPsec/IKEv2 (UDP ports 500/4500). Pros: centralized control, health checks, and easy autoscaling. Cons: a possible single point of failure and the need for proper sticky sessions, especially for UDP.

Common tools include HAProxy, Nginx Stream, Envoy (L4/L7), as well as commercial and cloud-native NLBs with direct passthrough. The key is ensuring symmetric routing and preserving "flow affinity" on a single node. TCP is simpler with stable sessions, while for UDP we hash source/destination to avoid breaking tunnels. At scale, ECMP with fine-tuning is common.

Critical environments favor Active-Active balancer pairs using VRRP/keepalived or built-in HA features. Out-of-band monitoring is essential too, checking not just port liveliness but true tunnel health.

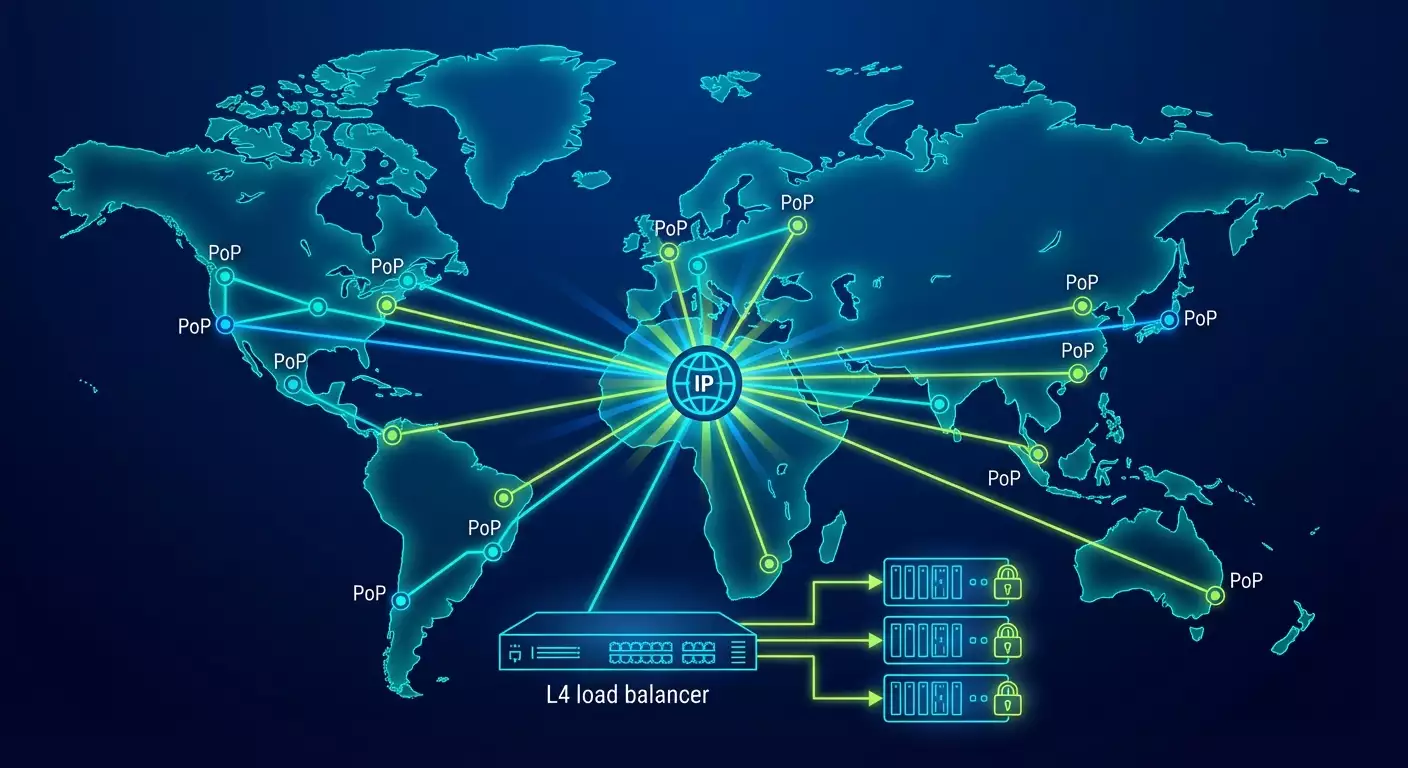

Anycast+BGP at the Edge for Global Points of Presence

If you operate multiple regions and PoPs, Anycast works near magic. The same IP is announced from various sites via BGP, routing users to the nearest PoP based on provider routes. Benefits include minimal latency and out-of-the-box geographic distribution. Inside a PoP, traffic is distributed among L4 or DPU load balancers. This brings user experience close to CDN levels.

Anycast demands careful route flapping control, accurate prepends and community tags for priority, and most importantly, clear failover mechanisms. If a PoP goes down, routes must withdraw swiftly. We have cases with convergence times under 30 seconds during outages and less than 5 seconds failover on internal L4 health failures—the users barely notice.

Direct Server Return and Bypassing Extra Copies

For maximum throughput, techniques like Direct Server Return (DSR) are used. In this model, incoming traffic passes through the load balancer, while outbound packets go directly from servers to clients, bypassing the balancer. This reduces pressure on load balancers but complicates networking. In VPN scenarios, DSR is rare due to session state and encryption needs, but for high-performance IPsec gateways in data centers, it’s viable if routing symmetry is guaranteed.

Keep in mind: DSR makes debugging harder and tunnel diagnostics must remain transparent. If your team isn’t equipped for this complexity, stick with classic L4 setups that scale well and provide clear metrics.

Load Balancing Algorithms: From Simple to Advanced

Round Robin, Weighted RR, and Why They Don’t Cut It Alone

Round Robin is fast and simple but ignores real-world differences: nodes vary in power and load isn’t uniform. Weighted Round Robin helps if you assign weights based on CPU, cores, or encryption acceleration. However, VPN traffic is unstable—some clients push gigabits, while tens of thousands barely use bandwidth. Connection count doesn’t equal traffic volume. You need a smarter approach.

In practice, Weighted RR is a good baseline, enhanced with telemetry and dynamic weight adjustments: for example, reducing a node’s weight by 20% at 75% CPU, 50% at 85%, and so on. This adaptive tuning isn’t perfect but helps smooth peak loads.

Least Connections / Least Load and Adaptive Metrics

Least Connections fits TCP VPNs (like OpenVPN-TCP), where active connection counts track load. For UDP tunnels (WireGuard, IPsec), we monitor active peers and PPS/BPS rates. A new metric called "effective sessions" weighs clients by recent actual traffic volume over the last N seconds. The load balancer assigns new flows to nodes with the lowest "effective" load—hence "Least Load."

By 2026, this is the norm: when a new peer connects, the node is selected based on CPU, IRQ softnet, PPS, BPS, drop/queue metrics, and active Security Associations (IPsec) or peers (WireGuard). Data is gathered via eBPF, Netlink, and Prometheus exporters, updating every 1-3 seconds. Be mindful of metric noise and use exponential smoothing to filter fluctuations.

Consistent Hashing, Maglev, and UDP Stickiness

The main headache with UDP is no TCP-style sessions, but tunnel state exists. You need sticky distribution so clients consistently hit the same node, avoiding tunnel resets, packet loss, and frustration. Techniques include consistent hashing on the 5-tuple, source IP, or a unique client ID. Maglev hash and ring-consistent hashing maintain stable client-to-node assignments even when servers are added or removed.

Pro tip: Clients behind CGNAT may have varying source IPs, so use a more stable key—like the client certificate CN (OpenVPN), WireGuard peer’s public key, or identity from RADIUS/AAA. The balancer needs a stable entity; otherwise, stickiness will degrade and tunnels will jump nodes on every reconnect.

Health Checks and Monitoring: Checking Tunnel Quality, Not Just Port Status

Passive and Active Checks

Basic TCP/UDP port checks are only a start. We do active checks: OpenVPN/TLS handshake verification, IPsec IKE_SA status, direct tunnel interface pings, and WireGuard test packets. A solid health check verifies not just daemon presence but successful test peer creation and encrypted packet exchange. Yes, it’s more complex, but this catches "alive-but-useless" processes with broken routing.

Add passive telemetry: packet drop rates, qdisc queue growth, crypto errors, handshake failure spikes, and latency tail (P95/P99). If P99 exceeds 200 ms for a regional PoP, that’s a warning. Drop rates over 0.1%—also a warning. Your thresholds should match your SLOs. By 2026, this is standard practice.

Multi-Tier Checks and Decision Mesh

A single health check is weak protection. We build cascades: fast L4 pings every 2 seconds, extended functional tests every 10-20 seconds, and synthetic transactions (like brief client-connect emulation) every minute. Node removal from the pool is based on the majority of signals to avoid false positives from flaky sensors. Node returns follow the same cascade with hysteresis.

Also, external probes from independent vantage points—agents from different ASNs test Anycast node availability and measure real latency. This safeguards against "all green inside" scenarios where users suffer due to provider issues or MTU problems. We had a case where route alternation and ECMP dropped some traffic, but internal PoP metrics stayed perfect. Only external checks revealed the truth.

False Positives and Debouncing

Errors happen. UDP packets get lost, CPU spikes briefly, GC pauses process. Use debouncing: N consecutive failures, observation windows, exponential backoff intervals. Always log not just failures but context—what metrics went out of bounds, node load at the time, any config changes. Post-incident diagnostics are your free upgrade.

Session Persistence for VPN: How to "Stick" Clients Properly

Sticky Based on 5-Tuple, Source IP, and User ID

The basic approach is stickiness on the 5-tuple: src IP, src port, dst IP, dst port, protocol. This works well for TCP. For UDP, where source ports may change, rely on more stable keys. For OpenVPN, use client CN from certificates or RADIUS username; for WireGuard, the peer public key; for IKEv2, IDi/IDr or EAP identifiers. Ideally, the load balancer reads these early in the handshake or works alongside a controller that provides the mapping.

The more stable your key, the fewer migrations—and the happier your users. No one likes tunnel flickering, even if reconnects only take seconds. We measured: moving a peer to another node during config updates raises P99 latency by 30-60% for 1-2 minutes. Good sticky and drain modes fix this.

Drain Mode, Graceful Reload, and Rolling Updates

Your updates shouldn’t hurt users. Before deploying a new build or config, put the node into drain: it stops accepting new clients but continues serving current sessions. Within 5-15 minutes (depending on tunnel timeouts and activity), most sessions naturally shift elsewhere. Then perform a graceful restart—a minimal break, or better, zero-downtime worker process rotation. Only then return the node to the pool.

WireGuard is known for simplicity, but recreating interfaces can reset peer states. Update carefully: atomically apply configs, stage new rules in advance, then switch. OpenVPN? Keep sessions alive using TLS session resumption and avoid forced key rotations during peaks.

State Sharing and Session Directory

Where do you store state if migration is needed? Some architectures use a session directory—a central cache mapping client to node accessible by load balancers and control layers. It doesn’t replicate encryption state but guides proper handshake direction. For IPsec, this means syncing State Associations between active nodes. In 2026, products and open-source tools exist that partially replicate SA or quickly restore it during failover. Full replication isn’t always necessary; often a quick handshake retry with correct redirect suffices.

VPN Scaling: Vertical, Horizontal, and «Smart» Autoscaling

Vertical Scaling: When It Makes Sense

Powerful CPU cores, AES-NI, ChaCha20-Poly1305, and DPU acceleration speed up VPN. Vertical upgrades patch capacity gaps fast but hit ceilings: costs rise, efficiency drops, and single failures hurt more. For small setups (up to 2-3 Gbps), vertical scaling is cost-effective. Beyond that, parallelism is smarter.

How to know you’ve hit the limit? If a node handles 10-12 Gbps WireGuard with 70-80% CPU and IRQ metrics maxing out, it’s time to scale horizontally. Turn on NUMA-friendly tuning, IRQ pinning, RSS, increase net.core.rmem/wmem, align MTU, then expand sideways.

Horizontal Scaling, Clusters, and Anycast

Horizontal scaling is your friend. Add more nodes, the load balancer spreads peers, Anycast routes users to the closest PoP. The success secret: minimal addition cost—automatic bootstrap, GitOps configs, readiness checks, and pool inclusion. Draining nodes for removal is just as straightforward.

In multi-clouds, we see setups with regional cloud NLBs in front of VPN VM pools, layered config managers, external monitoring, and Anycast IPs announced across regions. It works smoothly as long as health checks are accurate and user-ID stickiness holds.

Autoscaling on the Right Signals

Autoscaling on CPU alone is crude. In 2026, better signals are "effective sessions," PPS/BPS, P95/99 latency, handshake failure spikes, and drop rates. Simple thresholds: if P95 latency climbs 30% sustained over 3 minutes and PPS per node exceeds X, spin up a new node. When load drops consistently for 15 minutes, drain one node. To avoid thrashing, apply min/max limits and cooldown periods.

Heads up: cost matters. Each new node costs money. So factor in economics—if load is seasonal (e.g., 9-11 AM), keep a “hot standby” 10-15% above average. You pay in stability and less stress, because spikes settle down.

Security and Reliability: DDoS, Zero Trust, and Compliance

Protection from UDP Floods and L7 Specifics

VPNs frequently run over UDP, a favorite DDoS target. Use rate filtering at the edge, upstream provider prefilters, eBPF-based handshake packet rate limiting, and conntrack tuning. On the balancer, enable flood protection—preferably whitelisted by AS or geography if business permits. Don’t forget handshake cookies or puzzles in commercial solutions to increase attack cost for adversaries and lower it for you.

At L7 for OpenVPN/TLS and IPsec/IKEv2, use strict cipher suites, disable deprecated algorithms, enforce Perfect Forward Secrecy, renew certificates timely, and leverage HSMs/DPUs where it makes sense. No "temporarily re-enabled MD5"—ever.

Zero Trust and Segmentation

VPN without segmentation is like a master key. In 2026 that’s outdated. Use policy-based routing, device posture checks, short-lived tokens, and identity binding via IdP and MFA. Segment by routes, ACLs, and even PoP geography. Load balancers must understand policy scopes and which nodes serve which user groups. This boosts security, performance, and cost control.

Compliance and Logging

VPN logs are legally significant in many industries. Store connection metadata, failure reasons, client versions, crypto parameters. Anonymize where required and follow data residency laws. If Anycast routes users to other regions, ensure the policy permits it. Load balancing must not break compliance.

Practical Scenarios and Templates for OpenVPN, WireGuard, and IPsec

WireGuard: Fast UDP and High Stickiness Requirements

WireGuard loves minimalism and speed. For load balancing, use L4 with consistent hashing on the peer’s public key. At the edge — Anycast IP for the PoP; inside PoP — NLB/HAProxy/Envoy with ring-hash. Health checks include test peers, keepalive exchange monitoring, and handshake tracking. Autoscaling based on PPS/BPS and active peers. Updates go through drain mode and atomic config applies. We maintained 60,000 concurrent peers at P99 latency under 120 ms across three regions.

Tuning: net.core.rmem_max, rmem_default, busy_poll on interfaces, proper NIC offloads, IRQ pinning to cores. ChaCha20-Poly1305 cipher speeds up CPU-bound workloads. Security: limit new peer acceptance during attacks, rate-limit handshakes.

OpenVPN: TCP/UDP and Flexibility

OpenVPN remains a versatile option. For TCP, load balancing is easier: Least Connections plus session resumption, sticky on TLS sessions. For UDP, sticky on certificate CN or username to prevent reconnection bouncing. Health checks include trial TLS handshakes, tunnel ping tests, and renegotiation logging. Large deployments separate control and data planes, sharding users into groups.

Case study: a fintech company with 1 million MAUs and peaks of 85,000 simultaneous sessions switched from static RR to adaptive Least Load based on CPU, PPS, and P95 latency metrics. Added drain mode on releases, cut reconnect incidents by 42%, and reduced P99 latency by 35% in evenings. All without new hardware.

IPsec/IKEv2: Reliable and a Bit Heavier

IPsec is solid for site-to-site and enterprise mobile. Load balancing uses Anycast at the perimeter followed by L4 with consistent hashing on the IKE identity (IDi) or EAP login. Proper SA sync during failover is essential. If full replication isn’t available, ensure fast rekey with correct redirect. Health checks involve test SAs and ESP packet drop monitoring. Don’t forget NAT-T on UDP port 4500 and large MTU/fragmentation handling. SmartNIC/DPU offloads greatly improve performance.

Metrics, SLOs, and Economics: How to Know It’s Working

Node and Cluster-Level Metrics

Don’t just watch CPU and memory. Critical metrics include incoming/outgoing PPS/BPS, active sessions/peers, handshake rate, drop/queue over interfaces, latency at P50/P95/P99, crypto error rate, TCP retransmits, UDP fragmentation. Balancer internals: algorithm distribution, sticky reassignments percentage, health-fail reaction times. These help spot anomalies early.

At the cluster level, track load uniformity: coefficient of variation (CV) across nodes. CV over 0.25 for a sustained period means algorithm issues or "heavy hitter" clients. Enforce per-client or group quotas to limit peak PPS and prevent one client from spoiling the experience for everyone.

SLOs and Alerts without Overreaction

Set clear SLOs: Anycast PoP availability at 99.95% monthly, P99 latency under 150 ms regionally, reconnection rate below 1.5% per hour per 10k sessions, handshake error under 0.4%. Trigger alerts only after thresholds exceed for N minutes, with storm suppression during spikes. Reports don’t just show red lights but also offer actionable advice: add nodes, enable drain, check ASN routing, tune MTU.

Cost and Capacity Planning

Budget drives decisions. Plan capacity around real "heavy" traffic windows. Analyze historical data: what share of load is from the top 1% of clients? If too high, apply rate limiting. Maintain 20% spare capacity per PoP and 10% globally. Tie cloud autoscaling budgets so services don’t unexpectedly spike by 200% overnight from metric bugs.

Common Mistakes and Anti-Patterns: Where Teams Often Trip Up

Balancing by Connections Instead of Load

The classic trap: counting sessions and celebrating. Result? One node bears several "elephant" flows, others get "mice," but session counts are equal. The fix is PPS/BPS metrics and Least Load algorithm with heavy flow quotas.

Health Check Just Checks Port

A port can "be live" while the tunnel is down. Add functional checks, synthetic transactions, and external probes. Use hysteresis and cascaded decisions to avoid flip-flopping on temporary failures.

No Drain Mode or Graceful Updates

When you roll back releases, users flood reconnects and SLAs tank. Don’t be a hero. Hit drain mode, wait for sessions to drain naturally, update, then return node to the pool. Easy and smooth.

Step-by-Step Plan to Implement VPN Load Balancing

Step 1. Measure, Don’t Guess

Collect metrics: PPS/BPS, peers, handshake rate, latency, drops. Build time profiles and spot peaks. Without data, you're guessing.

Step 2. Choose Your Architecture

For small to mid-scale: L4 load balancer before pool, user ID stickiness, health checks with test peers. For global scale: Anycast+BGP at the edge, internal NLB/HAProxy/Envoy, autoscaling, and external AS vantage probes.

Step 3. Configure Algorithms and Stickiness

Start with Least Load and consistent hashing on a stable identifier (CN, public key, IDi). Check distribution fluctuations when adding/removing nodes. Set quotas for "elephants" and graceful migration on drain.

Step 4. Health Checks and Decision Cascade

Implement fast L4 checkers, slower functional tests, and external synthetic testing. Define thresholds, observation windows, and return policies. Document everything—you’ll thank yourself after the first incident.

Step 5. Autoscale and Manage Economy

Tie autoscaling to quality (P95, drops) and load (PPS/BPS) metrics. Set min/max node counts, budgets, and cooldowns. Test "connection storms" and UDP attacks in staging.

Step 6. Security and Compliance

Review crypto policies, logs, and segmentation. Enable handshake rate limits, perimeter filters, verify MTU. Consider SmartNIC/DPU if cost-justified.

Real-World Cases: What Worked and What Didn’t

Case 1: Fintech and Evening Peaks

The client’s evening surge: mobile analytics over VPN with up to 85k concurrent sessions. Switched from Weighted RR to Least Load, stickiness by CN, added drain mode, and layered health checks in three tiers. Result: 35% reduction in p99 latency at peak, 42% fewer reconnects, and saved two nodes by smoothing loads.

Case 2: Anycast and Multi-Cloud

Global SaaS with PoPs in six regions. Anycast routed users to nearest PoP; inside, NLB used ring-hash on WireGuard public keys. External monitoring quickly disabled degraded regions within 20-30 seconds via BGP changes and fast health checks. Achieved 99.97% uptime quarterly.

Case 3: DDoS and Handshake Flood Mitigation

A UDP flood on WireGuard ports triggered handshake storms. Activated eBPF rate limiting, handshake cookies, and raised upstream filters. Temporarily throttled client handshake retries. P95 latency normalized in 7 minutes; business barely noticed a tiny hiccup lasting five minutes.

Pre-Production Checklist: Did We Miss Anything?

Technical

- Load algorithm: Least Load + consistent hashing

- Sticky sessions on stable client IDs

- Health check cascade: fast, functional, external probes

- Drain mode and graceful updates

- Autoscaling on P95/PPS/BPS with budget limits

- Logging, alerts, SLOs, and post-mortems

Network

- Anycast+BGP for global PoPs

- Proper MTU and fragmentation handling

- ECMP symmetry and route diagnostics

- Perimeter filters and handshake rate limiting

Organizational

- Documentation and runbooks

- Incident drills and connection storms

- Regional compliance alignment

- Five-minute rollback plans

FAQ: Common Questions About VPN Load Balancing

Which Algorithm Should I Start With?

Start with Least Load and consistent hashing keyed by a stable client ID. It delivers good load balance and session stability without connection shifts when nodes change. Save Round Robin for test labs.

Do We Need Anycast If We’re In One Country?

If you have multiple regions within a country and geographically distributed users, Anycast cuts latency and boosts PoP resilience. For a single city and single PoP, benefits are minimal—focus on solid L4 and health checks instead.

How Do I Make WireGuard Sticky?

Use consistent hashing based on the peer’s public key. This is more stable than source IP, especially behind CGNAT. Keep a mapping of peer to node at the balancer or controller so new handshakes don’t shift servers.

What Should I Check Besides Port in Health Checks?

Verify handshakes, test packet exchanges in tunnels, P95/P99 latency, drop rates, crypto errors, and queue growth. External probes from different ASNs are a must to detect provider outages.

How Can I Update Without Downtime?

Enable drain mode, wait for sessions to gracefully finish, then do a graceful or zero-downtime restart. For WireGuard, apply configs atomically; for OpenVPN, rely on session resumption; for IPsec, use fast rekey and proper redirects.

Is It Worth Investing in DPU/SmartNIC?

If your nodes handle tens of gigabits and low latency under load is critical, DPUs and SmartNICs pay off. Smaller installs benefit more from well-configured L4 balancers, autoscaling, and network stack tuning.

What SLOs Should I Set at the Start?

Aim for 99.9-99.95% availability per PoP, P99 latency under 150 ms regionally, reconnection rates below 2% per hour for 10k sessions, and handshake errors under 0.5%. Tighten these as your maturity grows.